What Makes a Good Soccer Prediction Model

A good soccer prediction model shows probabilities: all of them, not only the highest one. And if it cannot explain its signals clearly, it is not as useful as it looks.

There are four important things to keep in mind. First, it works before kickoff, not after. Second, it treats uncertainty honestly. Third, it is validated on a large sample. Fourth, it shows its real accuracy and benchmark.

If you search for a soccer prediction model, you will find a lot of pages that promise confidence and wins, but very few can (or want to) explain what the model is actually doing. The question should not be “which model predicts winners?” The question should be “can I trust this model’s predictions?”

Quick answer

A good soccer prediction model should:

- use pre-match data only

- output probabilities for every outcome, not only the most likely one

- account for draws instead of pretending they do not exist

- be tested on a large match sample

- explain its signals in plain language

- stay honest about what it can and cannot do

That is the standard PredictApp tries to meet.

Why most soccer prediction content feels weak

Most prediction content is built backward. It starts with the conclusion and then tries to invent a story around it.

Team A is “in form.” Team B “looks tired.” TV and social media say the matchup is obvious, and they sound confident. They also tell you almost nothing about how the conclusion was reached.

A real soccer prediction model should do the opposite. It should begin with structured inputs, turn those inputs into probabilities, and then help the reader understand what those probabilities mean.

That difference matters because soccer has a lot of uncertainty. Goals are strange events. Draws are common. Small events change everything. A model must be able to handle all of that context together.

The first test: does it use pre-match information only?

This is the cleanest filter.

A trustworthy soccer prediction model should evaluate the match with information available before kickoff. Team strength. Recent performance. Lineup context. Market context. League environment. Injuries if they are known. Maybe travel or rest if they are relevant.

What it should not do is smuggle in information that only becomes obvious after the match begins.

That sounds basic, but it is where a lot of analysis goes wrong. Data leakage is one of the biggest reasons why models test very well but are in fact very bad at predicting. Every “model” should be able to tell you its accuracy and make it public.

PredictApp’s models are designed very carefully to make sure we only use pre-match information. The point is not to have a model that looks great in backtesting. The point is to help users understand the game more clearly before it starts.
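As a rough illustration of that pre-match discipline, here is a minimal sketch, assuming each input carries a timestamp for when it became known. The field name `known_at`, the function `pre_match_only`, and the sample records are hypothetical and are not PredictApp’s actual pipeline.

```python
from datetime import datetime, timezone


def pre_match_only(records, kickoff):
    """Keep only the records that were known strictly before kickoff."""
    return [r for r in records if r["known_at"] < kickoff]


# Hypothetical kickoff time and inputs, invented for this example.
kickoff = datetime(2024, 3, 2, 15, 0, tzinfo=timezone.utc)
records = [
    {"name": "team_strength", "known_at": datetime(2024, 3, 1, 9, 0, tzinfo=timezone.utc)},
    {"name": "confirmed_lineup", "known_at": datetime(2024, 3, 2, 14, 0, tzinfo=timezone.utc)},
    # This one only exists after the match has started, so it would be a leak.
    {"name": "halftime_score", "known_at": datetime(2024, 3, 2, 15, 50, tzinfo=timezone.utc)},
]

usable = pre_match_only(records, kickoff)
print([r["name"] for r in usable])  # prints ['team_strength', 'confirmed_lineup']
```

A filter like this is easy to run over a whole backtest, and an input that fails it is a likely reason for results that look too good.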

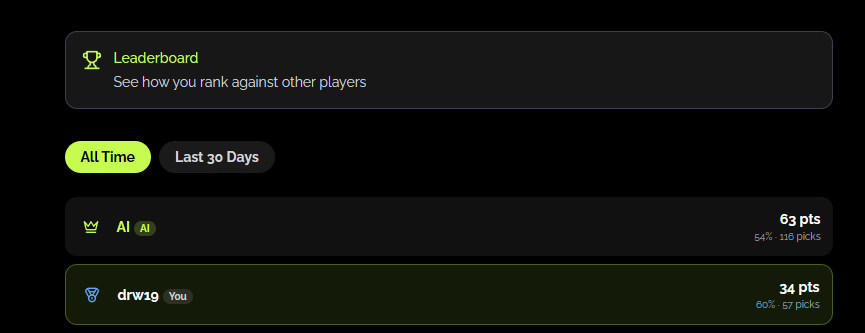

You can find how PredictApp’s model is performing by visiting the section People vs Dex in our app:

The second test: does it model draws honestly?

This is where soccer separates itself from a lot of other sports.

Draws are not edge cases. In the data behind PredictApp’s soccer work they happen in about 25% of matches (for reference, away wins happen in around 28%). So draws happen in roughly one out of every four games. They are a very big part of the sport.

That means that if a model treats draws as a residual, or as something that just happens, it is useless.

This is also where many systems go wrong. They focus on home win versus away win because it is simpler, cleaner, and easier to market. The result is a prediction layer that feels decisive but is less true to how soccer actually behaves.

A better soccer prediction model accepts that the sport is messy. It does not reduce the match to two outcomes. It gives the draw real weight.
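To make "real weight" concrete, here is a minimal sketch of one textbook way to get a draw probability: treat each team’s goals as an independent Poisson count and add up every scoreline. This is an illustrative assumption, not a description of PredictApp’s model, and the scoring rates below are made up.

```python
from math import exp, factorial


def poisson_pmf(k, rate):
    """Probability of exactly k goals when the expected number is `rate`."""
    return exp(-rate) * rate**k / factorial(k)


def outcome_probs(home_rate, away_rate, max_goals=10):
    """Return (home, draw, away) probabilities from two independent Poisson rates."""
    home = draw = away = 0.0
    for h in range(max_goals + 1):
        for a in range(max_goals + 1):
            p = poisson_pmf(h, home_rate) * poisson_pmf(a, away_rate)
            if h > a:
                home += p
            elif h == a:
                draw += p
            else:
                away += p
    # Renormalize to account for the tiny mass above max_goals.
    total = home + draw + away
    return home / total, draw / total, away / total


# Hypothetical expected goals for the home and away teams.
home, draw, away = outcome_probs(1.5, 1.1)
print(f"home={home:.3f} draw={draw:.3f} away={away:.3f}")
```

Even in this simple setup the draw comes out as a substantial share of the distribution, so a model that only compares home win with away win discards a large part of the picture.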

The third test: are the outputs probabilities or just opinions with numbers on top?

A useful model output tells you how likely each outcome is. It does not hand you a pick.

That difference is bigger than it sounds.

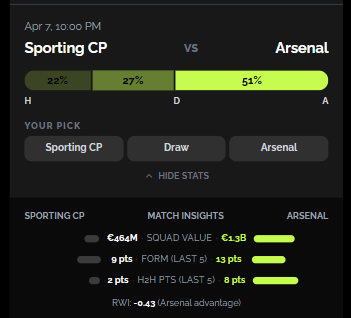

A pick tells you which result someone thinks will happen. A probability tells you how the model sees the range of outcomes. That is much more useful because it lets you compare the model signal with your own intuition, with market prices, or with the rest of the match context. That is why PredictApp gives you predictions together with match context:

What is a soccer prediction model, really?

At the simplest level, a soccer prediction model takes pre-match inputs and turns them into probabilities for home win, draw, and away win.
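One way to picture that output is as a small record holding three numbers that must add up to 1. The sketch below is an assumption about shape only: the class name `MatchProbabilities`, its fields, and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MatchProbabilities:
    """Three-way forecast for one match (hypothetical structure)."""
    home: float
    draw: float
    away: float

    def __post_init__(self):
        probs = (self.home, self.draw, self.away)
        if any(p < 0 for p in probs):
            raise ValueError("probabilities must be non-negative")
        if abs(sum(probs) - 1.0) > 1e-6:
            raise ValueError("probabilities must sum to 1")

    def most_likely(self):
        """The 'pick' is only a summary of the distribution, not a replacement for it."""
        outcomes = {"home": self.home, "draw": self.draw, "away": self.away}
        return max(outcomes, key=outcomes.get)


# Made-up numbers for illustration.
forecast = MatchProbabilities(home=0.46, draw=0.27, away=0.27)
print(forecast.most_likely())  # prints home
```

Keeping all three values together means the top outcome can always be derived from the forecast, while the reverse is not possible.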

The best versions do more than that. They also expose the reasoning layer behind the output. That might include team strength, lineup context, expected goal difference, recent results, recent meetings, team value, and league-specific conditions.

That matters because most readers do not need a full machine learning lecture. They need a trustworthy way to understand why the model sees the match the way it does.

The fourth test: can the model explain its own signals?

A model does not need to expose every line of math to be useful, but it should be able to explain the major signals behind the output. Otherwise the user cannot make informed decisions and does not learn anything.

For soccer, useful signals often include:

- team attacking and defensive strength

- lineup quality and likely starters

- recent results

- home advantage

- league scoring environment

- market expectations

PredictApp uses RWI, the Roiz Walss Index, as one of the structured signals in this system. It summarizes team venue strength, lineup context, home advantage, and league environment into a clean pre-match signal before kickoff. It is interpreted as the expected goal difference before kickoff. If you want the deeper breakdown, read our guide to what RWI means before a match. [link]

The fifth test: was it validated on enough matches?

Small-sample bragging is easy.

The real test is whether the model holds up across seasons, leagues, and changing conditions.

That is why scale matters. PredictApp’s soccer foundation work was validated on 34,042 matches across 14 leagues. That does not mean the model is magic. It means the claims are grounded in enough data to matter.

A real model should be able to say:

- how it was tested

- what sample it was tested on

- which metrics matter

- where it is strong

- where it is still limited

That last part matters. Honest limitations are a trust signal.
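For the "which metrics matter" point, here is a minimal sketch of three common ways to score three-way forecasts: accuracy, log loss, and the multi-class Brier score. The function names and the three toy matches are invented for illustration; they are not PredictApp’s results or evaluation code.

```python
from math import log

OUTCOMES = ("home", "draw", "away")


def accuracy(forecasts, results):
    """Share of matches where the most likely outcome actually happened."""
    hits = sum(max(f, key=f.get) == r for f, r in zip(forecasts, results))
    return hits / len(results)


def log_loss(forecasts, results, eps=1e-15):
    """Average negative log probability given to the real outcome (lower is better)."""
    return -sum(log(max(f[r], eps)) for f, r in zip(forecasts, results)) / len(results)


def brier_score(forecasts, results):
    """Average squared error across all three outcomes (lower is better)."""
    total = 0.0
    for f, r in zip(forecasts, results):
        total += sum((f[o] - (1.0 if o == r else 0.0)) ** 2 for o in OUTCOMES)
    return total / len(results)


# Toy data, made up for this example.
forecasts = [
    {"home": 0.50, "draw": 0.30, "away": 0.20},
    {"home": 0.20, "draw": 0.30, "away": 0.50},
    {"home": 0.40, "draw": 0.35, "away": 0.25},
]
results = ["home", "away", "draw"]

print(f"accuracy={accuracy(forecasts, results):.3f}")
print(f"log_loss={log_loss(forecasts, results):.3f}")
print(f"brier={brier_score(forecasts, results):.3f}")
```

Accuracy only looks at the top outcome, while log loss and the Brier score reward a model for putting sensible probability on every outcome, which is why they are useful alongside a headline accuracy number.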

The sixth test: does it stay honest about the benchmark?

A lot of prediction products want to imply they beat the market odds.

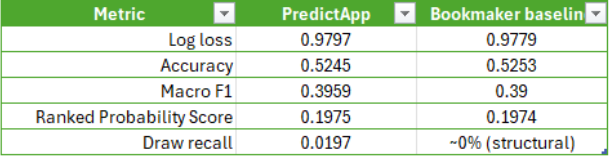

The more disciplined standard is this: compare the model with bookmaker baselines honestly, then explain what is different. In PredictApp’s case, the fair public claim is not “we beat bookmakers.” The fair claim is closer to “we operate in a similar accuracy zone, but we are calibrated differently and we actively try to model draws.”

That may sound less flashy, but it is the truth. PredictApp’s model is at the same level of accuracy as the bookmaker odds; we just think differently from the bookmakers. We are trying to give our users the best information, while bookmakers are trying to make money.

If a model cannot show you its benchmark and how it performs against it, it is probably fake.
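To compare against a bookmaker baseline, the odds first have to be turned into probabilities. Here is a minimal sketch, assuming decimal odds and the simplest way of removing the bookmaker margin (dividing each inverse odd by their sum). The odds and model values are made up, and other margin-removal methods exist.

```python
def implied_probabilities(home_odds, draw_odds, away_odds):
    """Convert decimal odds into probabilities that sum to 1."""
    raw = {"home": 1 / home_odds, "draw": 1 / draw_odds, "away": 1 / away_odds}
    overround = sum(raw.values())  # above 1 when the bookmaker margin is included
    return {outcome: p / overround for outcome, p in raw.items()}


# Hypothetical market odds and model forecast for the same match.
market = implied_probabilities(2.10, 3.40, 3.60)
model = {"home": 0.44, "draw": 0.29, "away": 0.27}

for outcome in market:
    diff = model[outcome] - market[outcome]
    print(f"{outcome}: market={market[outcome]:.3f} model={model[outcome]:.3f} diff={diff:+.3f}")
```

Once both sides are expressed as probabilities, they can be scored on the same matches with the same metrics, which is what an honest benchmark requires.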

What good soccer model content should help you understand

If a page is built around a solid soccer prediction model, you should leave with better context.

Not “who will win?”

But:

- how much uncertainty is in this match?

- how live is the draw?

- is there a big signal against the most expected outcome?

- what does the pre-match context actually say?

- what is the combined probability of the less probable outcomes?

That is the job. Better framing. Better context. Better decisions.
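Two of those questions can be answered directly from the probabilities. The sketch below, with hypothetical function names and made-up numbers, measures uncertainty as Shannon entropy and adds up the probability of everything except the favorite.

```python
from math import log2


def uncertainty_bits(probs):
    """Shannon entropy in bits: 0 means certain, log2(3) means fully open."""
    return -sum(p * log2(p) for p in probs.values() if p > 0)


def non_favorite_probability(probs):
    """Combined probability of every outcome except the most likely one."""
    return 1.0 - max(probs.values())


# Made-up forecast for illustration.
match = {"home": 0.45, "draw": 0.28, "away": 0.27}

print(f"uncertainty={uncertainty_bits(match):.2f} bits (max {log2(3):.2f})")
print(f"favorite does not win: {non_favorite_probability(match):.0%}")
```

In this example the favorite sits at 45%, so the other two outcomes together are more likely than the favorite. A page that only shows the top outcome hides exactly that.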

How PredictApp approaches this

PredictApp is not trying to tell you who will win. We are trying to help you understand the games and the context better so you can make better decisions.

Our information is model-backed match analysis for people who want a smarter read before kickoff. The product combines probabilities, matchup context, and structured signals like RWI so users can understand what the model sees. The point is not just to show a top outcome. It is to help the user interpret the whole distribution in a useful way.

Free users can access RWI, matchup stats and context, so they see what PredictApp thinks.

What accuracy should you realistically expect?

If a page claims near-perfect performance, be skeptical. In real soccer prediction work, even strong systems live in a much narrower band than casual marketing pages imply. As a benchmark, in PredictApp’s data we found that bookmakers’ probabilities are around 52% accurate in predicting the outcome. Here is how our model compares to the bookmakers’ odds:

Final thought

A good soccer prediction model is not the one that gives you an outcome. It is the one that gives you the distribution and the context.

Soccer has noise. Soccer has draws. Soccer has matches where the most likely outcome still does not happen. A useful model respects that and helps you think more clearly.

That is a much better standard than pretending certainty exists.

FAQ

A trustworthy soccer prediction model uses pre-match data, outputs calibrated probabilities, models draws, is validated on a large sample, and shows its benchmark and results.

Yes. Draws are a meaningful part of soccer and should be modeled directly, not ignored.

No. A model gives structured probabilities and context. A picks page usually pushes conclusions without much transparency.

Look for pre-match discipline, evidence of validation, honest probability outputs, clear explanations of what the numbers mean, and the model’s real accuracy.

See it in practice

Create a free account at PredictApp to see model-backed match analysis before kickoff.

Explore predictions by league

You can also go straight into PredictApp’s landing pages for the leagues you care about: